Highly scalable and standards based

Model Inference Platform on Kubernetes

for Trusted AI

Why KServe?

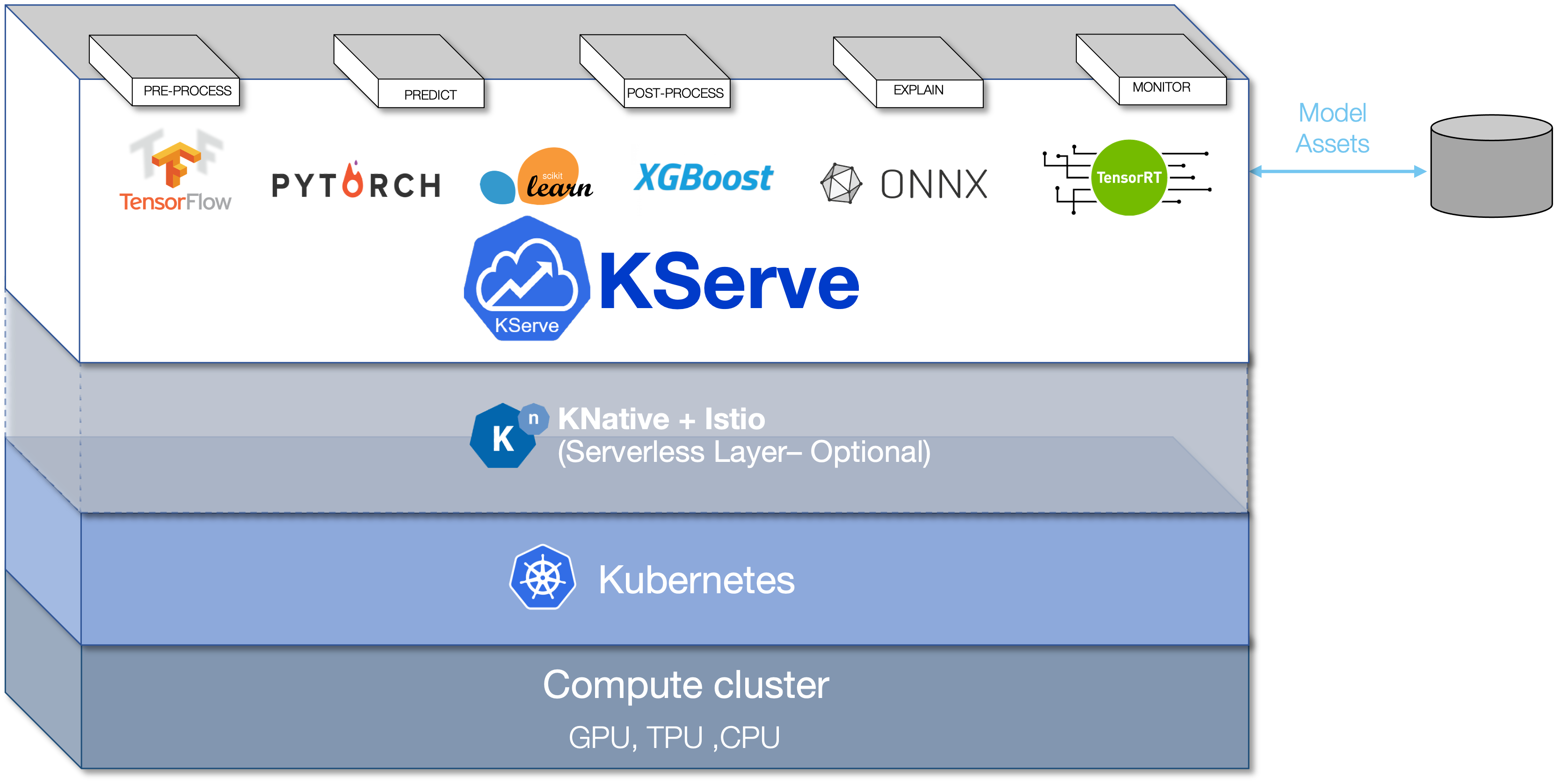

- KServe is a standard Model Inference Platform on Kubernetes, built for highly scalable use cases.

- Provides performant, standardized inference protocol across ML frameworks.

- Support modern serverless inference workload with Autoscaling including Scale to Zero on GPU.

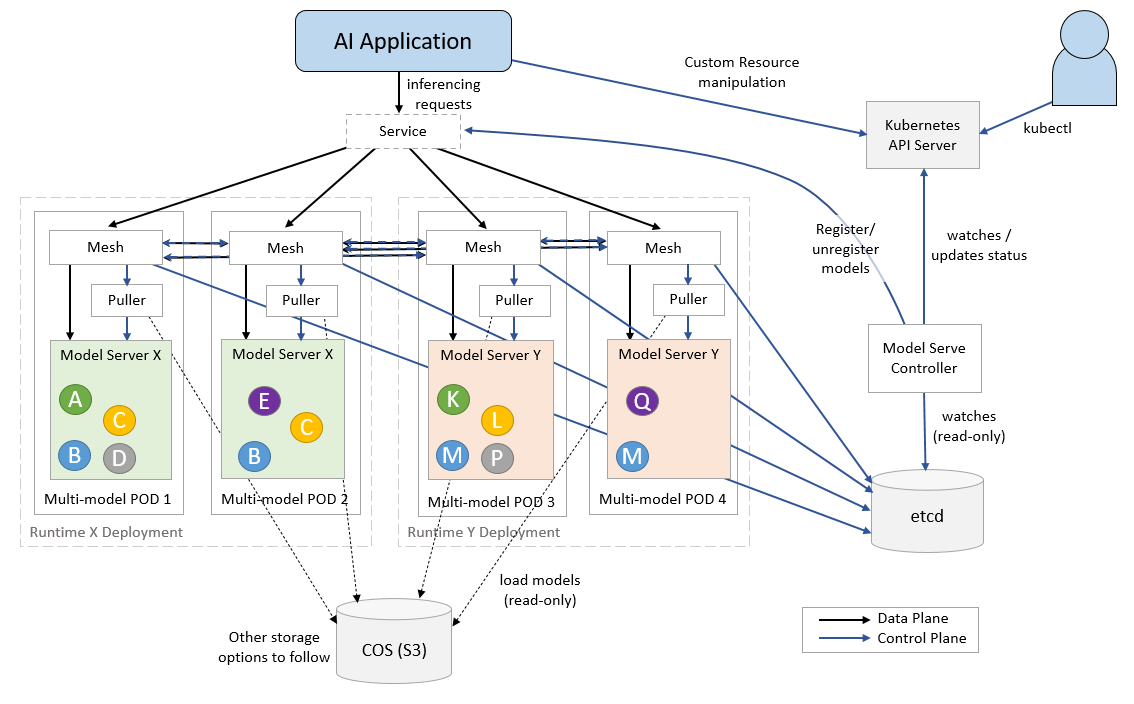

- Provides high scalability, density packing and intelligent routing using ModelMesh

- Simple and Pluggable production serving for production ML serving including prediction, pre/post processing, monitoring and explainability.

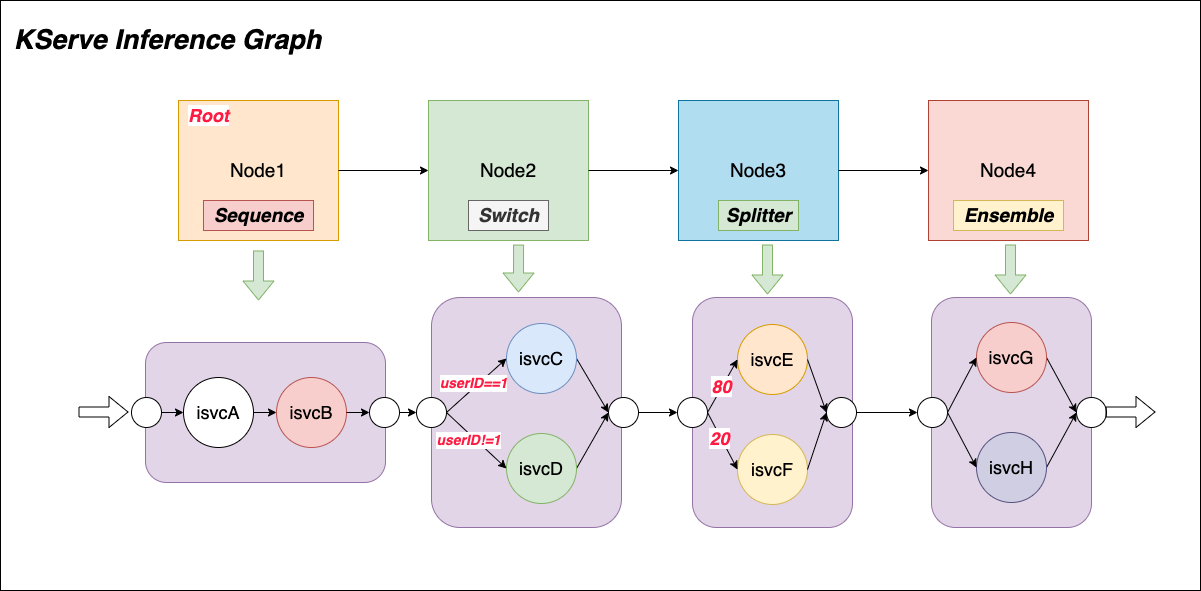

- Advanced deployments with canary rollout, experiments, ensembles and transformers.

KServe Components

Provides Serverless deployment of single model inference on CPU/GPU for common ML frameworks

Scikit-Learn, XGBoost, Tensorflow, PyTorch as well as pluggable custom model runtime.

ModelMesh is designed for high-scale, high-density and frequently-changing model use cases. ModelMesh intelligently loads and unloads AI models to and from memory to strike an intelligent trade-off between responsiveness to users and computational footprint.

Provides ML model inspection and interpretation, KServe integrates Alibi, AI Explainability 360,

Captum to help explain the predictions and gauge the confidence of those predictions.

Enables payload logging, outlier, adversarial and drift detection, KServe integrates Alibi-detect, AI

Fairness 360, Adversarial Robustness Toolbox (ART) to help monitor the ML models on production.

KServe inference graph supports four types of routing node: Sequence, Switch, Ensemble, Splitter.

Adopters

..and more!